preprint version because scihub doesn't have it yet https://www.ncbi.nlm.nih.gov/pmc/articles/PMC10120732/

Abstract

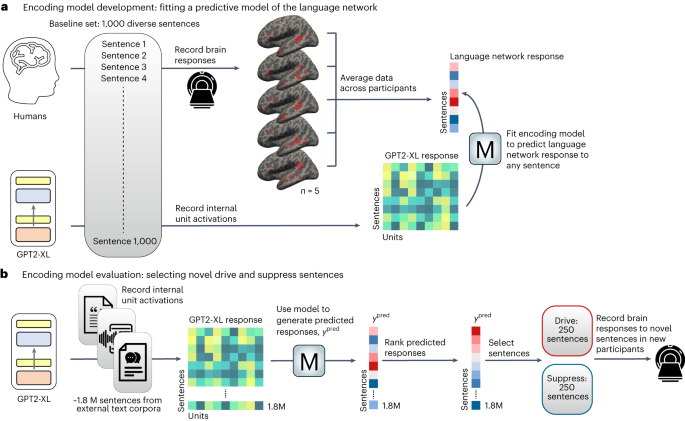

Transformer models such as GPT generate human-like language and are predictive of human brain responses to language. Here, using functional-MRI-measured brain responses to 1,000 diverse sentences, we first show that a GPT-based encoding model can predict the magnitude of the brain response associated with each sentence. We then use the model to identify new sentences that are predicted to drive or suppress responses in the human language network. We show that these model-selected novel sentences indeed strongly drive and suppress the activity of human language areas in new individuals. A systematic analysis of the model-selected sentences reveals that surprisal and well-formedness of linguistic input are key determinants of response strength in the language network. These results establish the ability of neural network models to not only mimic human language but also non-invasively control neural activity in higher-level cortical areas, such as the language network.

Study seems neat, but I feel like “non-invasively control neural activity” is quite the up-sell. Like, couldn't any behaviour that elicits some kind of response from another person be said to “non-invasively control neural activity”?

If I make someone flinch by pretending to punch them, am I non-invasively controlling their neural activity?

It’s still cool (and scary) that they used LLMs and other data science to automate creating sentences that trigger specific neural responses. Surely this won’t be used for more horrors.

probably, but not necessarily in such a targeted way, at least not without first building a similar dataset and model that associates fMRI-inferred activity with whatever kind of stimulus you want to present to the subject

advertisers right now:

I'm neither a linguist nor a neuroscientist, but it sounds like "non-invasively control neural activity in higher-level cortical areas" may be bazinga-speak for "people react to LLM babble the same way they react to actual people saying things".

it's a bit stronger than that in the sense that the model that the authors developed can aim at particular regions of the language processing network and stimulate or suppress activity in those regions specifically
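
for anyone wondering what "use the model to identify new sentences" could look like mechanically, here's a rough sketch. everything in it is an assumption on my part: the abstract doesn't say which regression they used, so I'm assuming plain ridge regression, and the random vectors are stand-ins for GPT sentence embeddings and measured fMRI responses (no real data here). `fit_ridge` and `select_extremes` are names I made up.

```python
import numpy as np

def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression: w = (X^T X + alpha*I)^-1 X^T y."""
    n_features = X.shape[1]
    A = X.T @ X + alpha * np.eye(n_features)
    return np.linalg.solve(A, X.T @ y)

def select_extremes(w, candidates, k=3):
    """Indices of the k candidates with the highest and lowest predicted response."""
    pred = candidates @ w
    order = np.argsort(pred)               # ascending by predicted response
    return order[-k:][::-1], order[:k]     # (predicted "drive", predicted "suppress")

# Synthetic stand-ins (assumption): 1,000 "sentences" with 16-dim "embeddings"
# and a noisy linear "brain response". Real embeddings would be much larger.
rng = np.random.default_rng(0)
n_train, n_pool, dim = 1000, 5000, 16
true_w = rng.normal(size=dim)
X_train = rng.normal(size=(n_train, dim))
y_train = X_train @ true_w + 0.1 * rng.normal(size=n_train)

# Step 1: fit the encoding model on the sentences that were actually scanned.
w = fit_ridge(X_train, y_train, alpha=1.0)

# Step 2: score a large pool of unseen candidate sentences and keep the extremes.
pool = rng.normal(size=(n_pool, dim))
drive_idx, suppress_idx = select_extremes(w, pool, k=3)
```

the part the abstract adds on top of this is the validation step: showing the selected sentences to new people in the scanner and checking the responses actually go where the model predicted.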