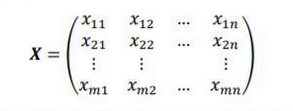

MATLAB is for matrix calcs. Matrix indices start at 1, fight me. Given a matrix X of m x n size, you write

MATLAB has many issues, among them accessibility (which can be remedied by piracy) and being closed-source, but as a program designed to do computational matrix manipulation, starting at index 1 is literally correct. This is how you learn matrix indices in intro linear algebra. How does it make sense to use software to assist computation and start indexing at 0, while on paper you write the equations and indices starting at 1? CS majors go home.

Is there a reason for the convention other than that's how most people count? (Which is a perfectly fine reason, I'm just curious)

When you say the first element of a matrix, first implies one and not zero. This is how linear algebra was invented (on paper, by a human mathematician), taught, and passed down to fellow humans.

Starting indexes at zero stems from the lineage of C programming and the binary nature of computers. For example,

Computer memory has 2^N cells addressed by N bits. Now if we start counting at 1, those 2^N cells would need N+1 address lines. The extra bit is needed to reach exactly one address, the last one (binary 1000 when N = 3). Another way to solve it would be to leave the last cell inaccessible and keep N address lines.
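To make the address-line count concrete, here is a minimal Python sketch. The helper name `address_bits` and the choice of N = 3 are illustrative assumptions, not something from the comment above.

```python
def address_bits(highest_address: int) -> int:
    """Number of binary digits needed to write highest_address (at least 1)."""
    return max(1, highest_address.bit_length())

N = 3              # illustrative choice: 2**3 = 8 cells
cells = 2 ** N

# Counting from 0: addresses run 0..7, and the largest is 0b111.
bits_from_zero = address_bits(cells - 1)

# Counting from 1: addresses run 1..8, and the largest is 0b1000.
bits_from_one = address_bits(cells)

print(f"{cells} cells, 0-based: {bits_from_zero} bits, 1-based: {bits_from_one} bits")
```

With 8 cells, the 0-based scheme fits in 3 bits while the 1-based scheme needs a fourth bit just for the last cell.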

This is why math and physics people who learn linear algebra and matrix calculus learn to index at 1 (on a piece of paper), while computer science programmers index at 0.

Is linear algebra older than 0? Hang on (no, it is not, formalised in 17th century)

In my CS course, at least, it was treated as "engineering", so we did both linear algebra and C programming. For everyone, counting from 1 was more natural, and the C method had to be taught a few times throughout the course (starting with Java loops, where malloc wasn't involved; OOP was probably the first unit anyone did for CS). As a habit it tended to stick even where we didn't really use it (or in languages that don't, e.g. Lua), given how grueling C programming was and how many other languages were downstream of it.

I guess you could analogise it to things like saying the "17th century" is 1600-1699 (first century is 0001 to 0099, I guess). In CS you are counting from the very start of a thing (e.g. how many apple-widths to get to the first apple), vs the more common "how many apples until you've got the first apple". Or something, idk.
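The apple-width picture is roughly how C-style array access works: the index is an offset from the start, so the first element sits zero widths away. A rough Python sketch, where `base`, `width`, and both helper names are made up for illustration:

```python
def address_zero_based(base: int, width: int, i: int) -> int:
    """Index i is an offset: how many element widths to skip from the start."""
    return base + i * width

def address_one_based(base: int, width: int, k: int) -> int:
    """Index k is an ordinal (first, second, ...), so subtract 1 to get the offset."""
    return base + (k - 1) * width

base, width = 1000, 8  # hypothetical start address and element size in bytes

# The first element is offset 0 in one convention and ordinal 1 in the other,
# but both land on the same address.
assert address_zero_based(base, width, 0) == address_one_based(base, width, 1) == base

# The third element is two widths in, whichever way you name it.
assert address_zero_based(base, width, 2) == address_one_based(base, width, 3) == 1016
```

Both conventions reach the same cells; the 1-based one just pays for a subtraction on every lookup, which is one reason the offset convention stuck in C and its descendants.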

I'm drunk and avoiding housework, sorry

There is no general convention in mathematics and linear algebra to index from 1. It highly depends on the department and person and it’s becoming more common to index from 0.

This is the first time I've seen this meme and I'm on the friend group B side. Now I know how it feels.